The Hidden Cost of False Positives

Your dashboard may look clean if alerts are filtered by human review before they hit a manager’s queue. But drivers still live with what fires in the cab. If those alerts are wrong, your safety program is not preventing incidents. It is just documenting them.

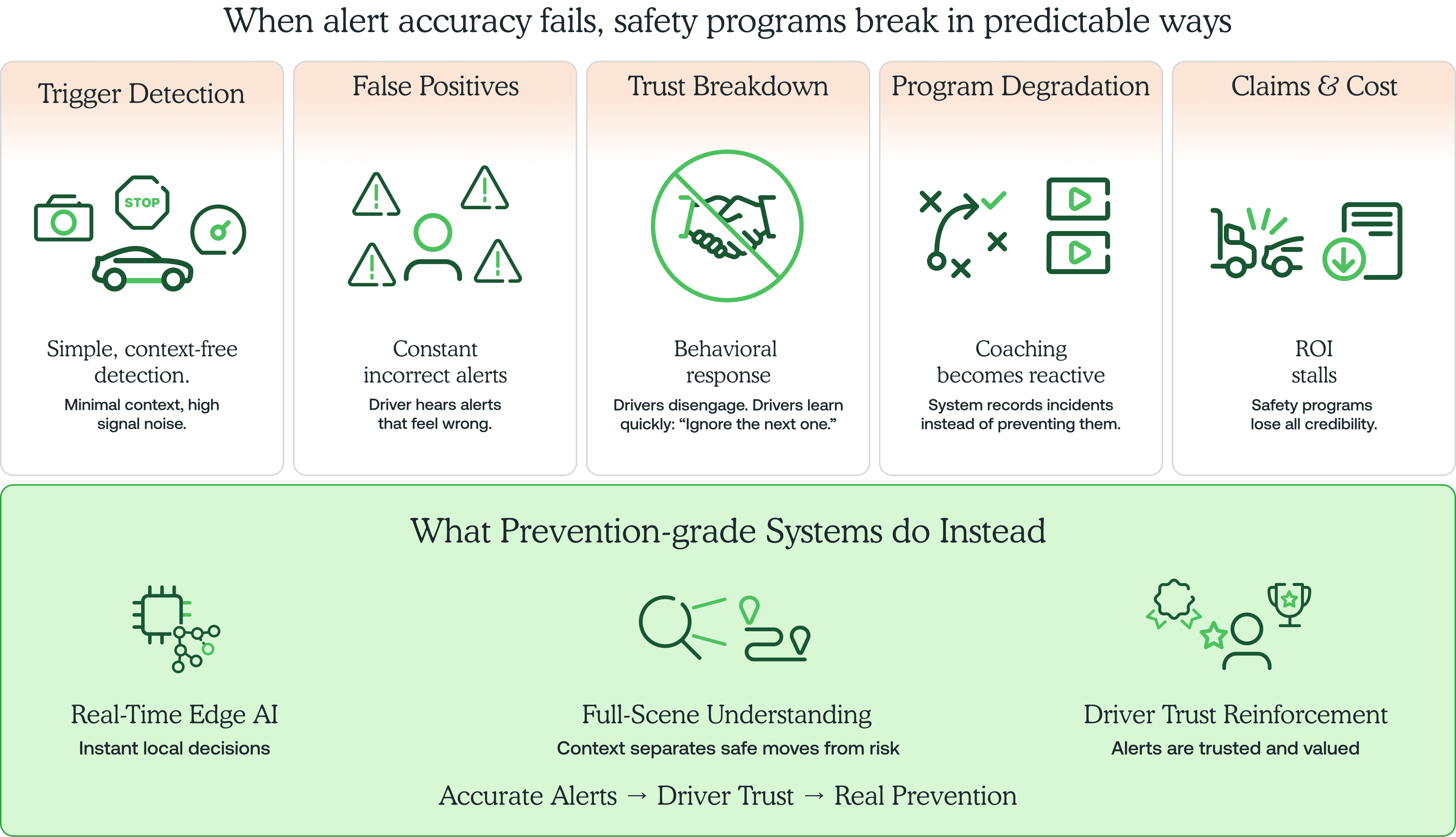

False in-cab alerts teach a fast lesson: ignore the next one.

It does not take many. A driver gets flagged for braking to avoid a cut-off. Another gets coached for a stop sign that was not in their lane. A third hears an alert during a clean maneuver. Each one chips away at credibility. After enough of them, the system becomes background noise. Drivers stop responding. And the automated coaching that was supposed to prevent incidents stops working.

That is the hidden cost of false positives.

Prevention only works when the alert is right in the moment. That takes prevention-grade accuracy built on full-scene understanding, so the system can tell a safe move from a risky one. That is the standard Netradyne is built for, so drivers trust the system and managers spend less time sorting noise.

Why so many programs end up here

Most fleet platforms did not start as safety systems. They started with tracking, compliance, and operations. Cameras and AI coaching were added later. That history shapes how the systems work. Detection models built for operational visibility behave very differently from models designed to coach a driver in real time.

The thresholds are simpler. The context is thinner. And the false positive rate reflects the difference.

When those alerts generate too much noise, vendors often route events through human reviewers before they reach the safety manager. The dashboard stays clean. The coaching queue looks manageable. But the driver never sees that cleanup.

If a system cannot deliver accurate alerts in real time, without a human in the loop, it is not a prevention tool. It is a recording device with a speaker.

Two traps that look different but end the same way

Knowing this, vendors have landed in two places. Neither solves the problem.

- The first trap: the vendor filters events before they reach the manager, but still fires alerts immediately in the cab. The coaching queue looks clean. The driver experience is still noisy. Managers think the system is performing. Drivers know it isn't. Buy-in dies quietly, one bad alert at a time.

- The second trap: the vendor delays alerts until after human review to protect in-cab accuracy. The alerts are more accurate, but they're no longer real time. The moment to change behavior before an incident is gone.

Either way, if accuracy depends on a human after the event, you don't have a prevention program. You have a better way to file claims.

Prevention starts with a driver who trusts what they hear

Netradyne was built differently. Not cameras added to a fleet platform. A safety AI platform from day one, with more than 28 billion miles of commercial driving data behind it. That foundation is what makes prevention-grade accuracy possible.

Prevention-grade means one thing: a driver hears an alert and trusts it enough to act on it. Not eventually. In the moment.

Here's what it takes to get there.

- The alert has to fire at the right time

A system that uploads video, waits for cloud processing, or queues an event for human review has already missed the prevention window. The coaching moment lasts a fraction of a second. Miss it and you have a recording. You don't have prevention.

A system that can't suppress weak signals in real time doesn't just miss the window. It hijacks the driver's attention with noise, which is its own form of failure.

The answer: Edge Intelligence

We run our core AI models directly on the Driver•i device, at the edge. There is no cloud round trip for real-time decisions. On-board inference resolves risk determinations in sub-second loops, with no dependency on connectivity. This matters most where fleets can't afford gaps: remote routes, tunnels, dead zones, and depot environments where cellular coverage is unreliable.

- The system must understand scene context, not just triggers

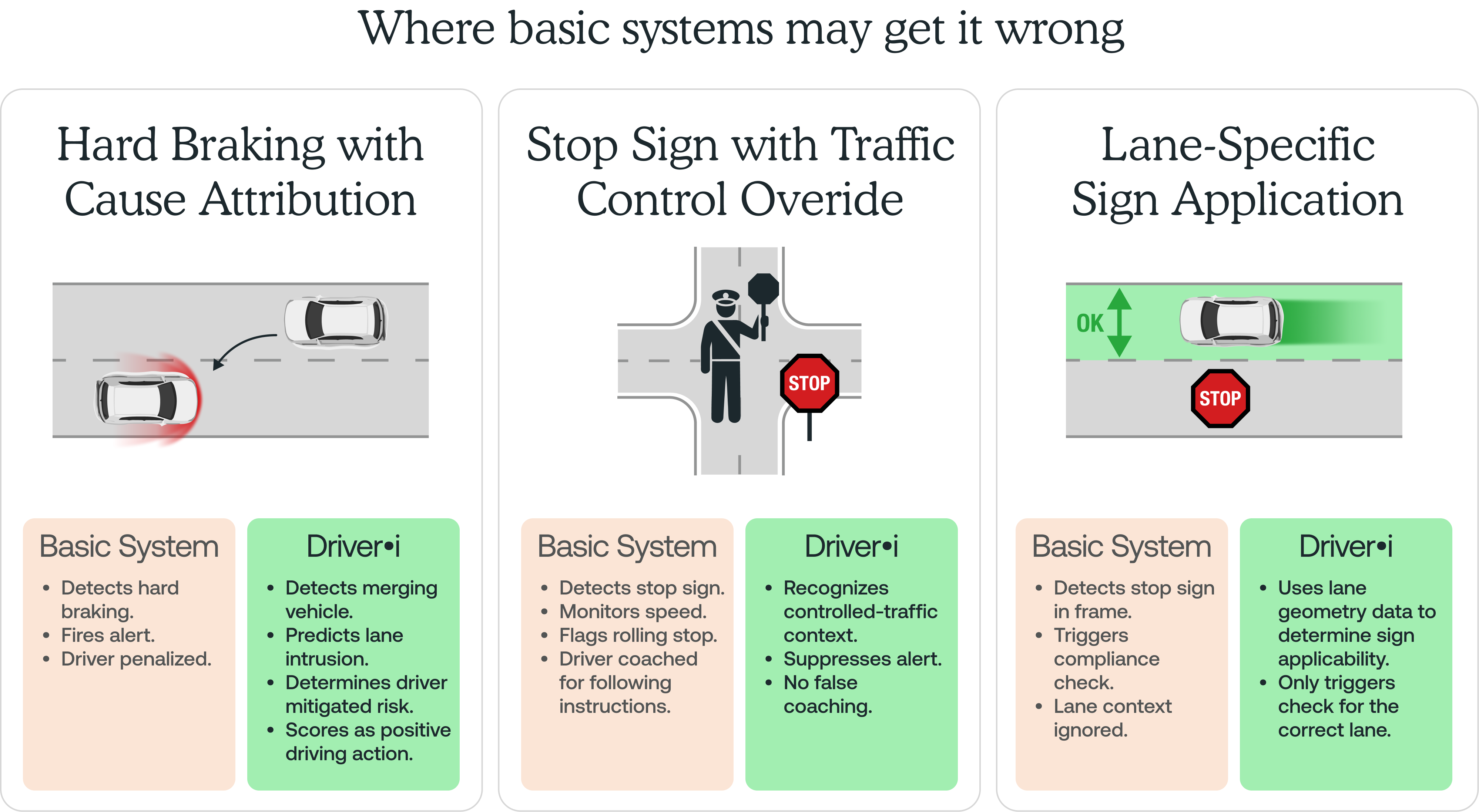

Most false positives come from simplistic detection: one sensor input, one threshold, one decision. Hard braking fires an alert. Short following distance fires an alert. A stop sign appears in frame and triggers a compliance check regardless of which lane it governs.

Real roads don't work that way. A system that treats every trigger as equivalent will punish drivers who are doing exactly the right thing.
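To make the contrast concrete, here is a minimal Python sketch comparing a single-threshold hard-braking trigger with one that checks scene context first. Every name and number in it (`BrakeEvent`, `HARD_BRAKE_G`, `CUT_IN_WINDOW_S`) is a hypothetical chosen for illustration. It is not Netradyne's actual logic or thresholds.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical values, for illustration only.
HARD_BRAKE_G = 0.45      # deceleration (in g) that a naive system flags
CUT_IN_WINDOW_S = 1.5    # how recently a vehicle must have cut in to explain the braking


@dataclass
class BrakeEvent:
    decel_g: float                         # peak deceleration during the event
    seconds_since_cut_in: Optional[float]  # None if no vehicle cut in ahead


def threshold_alert(event: BrakeEvent) -> bool:
    """One input, one threshold, one decision."""
    return event.decel_g >= HARD_BRAKE_G


def context_alert(event: BrakeEvent) -> bool:
    """Alert on hard braking only when the scene does not explain it."""
    if event.decel_g < HARD_BRAKE_G:
        return False
    forced_by_cut_in = (
        event.seconds_since_cut_in is not None
        and event.seconds_since_cut_in <= CUT_IN_WINDOW_S
    )
    return not forced_by_cut_in


evasive = BrakeEvent(decel_g=0.6, seconds_since_cut_in=0.8)
unprompted = BrakeEvent(decel_g=0.6, seconds_since_cut_in=None)
```

In this sketch the threshold rule flags both events, while the context rule flags only `unprompted`. The driver who braked to avoid a cut-off is not penalized.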

Consider what it takes to make reliable decisions in a dynamic driving environment. The perception stack that powers a fully autonomous vehicle doesn't watch for single events. It builds a continuous model of what is happening around the vehicle: object positions, predicted motion, lane geometry, signal states, and how those conditions are changing from moment to moment.

That level of scene intelligence is what separates a system that understands driving from one that merely records it.

Netradyne Driver•i technology applies the same class of perception intelligence. The difference is the output. Instead of controlling the vehicle, it coaches the driver.

The answer: Netradyne AI architecture

Netradyne Driver•i uses a patented multi-task neural network architecture to build a continuous model of the driving scene. The network runs parallel processing branches from a shared computational trunk. One branch handles object detection, classifying vehicles, pedestrians, traffic signals, and signs. A separate branch detects and parameterizes lane boundaries and road geometry, computing the likelihood that a curve or lane edge is present at a given location and localizing it precisely if found.

These branches don't operate in isolation. Driver•i combines object positions, vehicle motion, lane geometry, and how conditions are changing over time into a single scene understanding. That's what allows the system to determine not just that something happened, but why.
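As a rough illustration of why combining branch outputs matters, the Python sketch below joins a sign detection with a lane estimate before deciding whether a stop-sign check applies. The types, fields, and geometry are hypothetical simplifications, not the Driver•i data model. In particular, `governed_lateral_m` assumes the object branch can estimate the lateral position of the lane a sign governs.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LaneEstimate:
    """Hypothetical lane-branch output: ego-lane edges, in metres from the vehicle centre."""
    left_m: float    # negative values are to the left
    right_m: float


@dataclass
class SignDetection:
    """Hypothetical object-branch output for one detected sign."""
    kind: str                  # e.g. "stop", "yield"
    governed_lateral_m: float  # lateral centre of the lane the sign is estimated to govern


def applies_to_ego_lane(sign: SignDetection, lane: LaneEstimate) -> bool:
    """A sign is relevant only if the lane it governs overlaps the ego lane."""
    return lane.left_m <= sign.governed_lateral_m <= lane.right_m


def needs_stop_check(signs: List[SignDetection], lane: LaneEstimate) -> bool:
    """Run stop-sign compliance only for stop signs that govern the ego lane."""
    return any(s.kind == "stop" and applies_to_ego_lane(s, lane) for s in signs)


ego_lane = LaneEstimate(left_m=-1.8, right_m=1.8)
side_road_stop = SignDetection(kind="stop", governed_lateral_m=5.5)
own_lane_stop = SignDetection(kind="stop", governed_lateral_m=0.0)
```

With these inputs, `needs_stop_check([side_road_stop], ego_lane)` is `False`. The sign is in frame, but it governs a different lane, so no compliance check fires. Adding `own_lane_stop` to the list makes the check apply.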

- The system must recognize what drivers do right

If every alert is a correction, drivers conclude the system is built against them. Recognizing safe behavior is more than noting the absence of a bad event. It requires the same causal intelligence as risk detection: the system must determine that the driver made a deliberate, skilled choice.

The answer: GreenZone Score

We do this with our proprietary GreenZone Score, which continuously tracks and rewards specific, context-verified driving behaviors: creating space for a merging vehicle, maintaining safe following distance under dynamic conditions, clean stop sign compliance, and sustained speed consistency. Because Driver•i analyzes 100% of drive time with full scene context, it can distinguish a deliberate protective action from a passive absence of violation.

The numbers bear this out. Drivers who reach a GreenZone Score of 950 or above are twice as likely to avoid a collision.* A 50-point score increase correlates with a 12 to 15 percent reduction in collision rates.** These aren't engagement metrics. They're safety outcomes tied directly to the behavioral model the scoring system reinforces.

The result: a program that prevents, not just records

A safety program only improves outcomes when three things are true: drivers trust the alerts, managers coach fewer moments with higher impact, and recognition reinforces safe driving instead of creating resentment.

Fleets running Netradyne report the results of that dynamic: fewer incidents, lower claims costs, and drivers who trust the system enough to respond when it alerts.

See how fleets are applying this approach in real operations. Request a demo.

* Based on customer data.

** Individual results and conditions may vary.